Next to gasoline, electricity is the largest energy expense for households. The most recent data from the U.S. Energy Information Administration (EIA) show that the average home uses just under 11,000 kilowatthours (kWh) a year. Although people are buying more energy-efficient appliances or replacing older ones, their electricity bills continue to climb. Much of this can be explained by how the local utility company acquires electricity. Prices are not set arbitrarily. In many regions of the United States, energy pricing is regulated, and changes in electric rates must be approved based on the input costs of generating the power. One of the biggest cost drivers is the choice of primary energy source used to generate that electricity.

Energy is measured in British thermal units (Btu)

To understand cost, we must first understand how energy is measured, and that measurement is done using the British thermal unit (Btu). One Btu is the amount of heat needed to raise the temperature of one pound of water by 1 degree Fahrenheit. The more Btu a given quantity of a fuel contains, the more energy that source can deliver. Keep in mind that the Btu value describes only energy content; it does not factor in cost.
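Electricity bills are stated in kilowatthours rather than Btu, so a quick conversion helps connect the two units. The sketch below assumes the commonly used factor of 3,412 Btu per kWh, which this article does not state, and applies it to the roughly 11,000 kWh annual household figure cited earlier. The function name is illustrative only.

```python
# Assumption: 1 kWh = 3,412 Btu (commonly used conversion factor, not from this article).
BTU_PER_KWH = 3412


def kwh_to_btu(kwh: float) -> float:
    """Convert electrical energy in kilowatthours to British thermal units."""
    return kwh * BTU_PER_KWH


# Rounded annual household usage from the article ("just under 11,000 kWh").
annual_kwh = 11_000
annual_btu = kwh_to_btu(annual_kwh)

print(f"{annual_kwh:,} kWh is about {annual_btu / 1e6:.1f} million Btu")
```

Under that assumption, a typical household's yearly electricity use works out to roughly 37.5 million Btu.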

Only a few primary energy sources can meet our enormous demand

According to the EIA, the U.S. consumed almost 93 quadrillion Btu of energy from primary sources in 2020. A number that large underscores the importance of sufficient supply: even a source with a high energy content cannot be relied on if supplies are inadequate. Looking more closely at the country’s energy demand, we find that electric power accounts for almost 36 quadrillion Btu. Not surprisingly, almost all electricity is generated from primary sources, which include petroleum, natural gas, renewable energy, coal, and nuclear power.

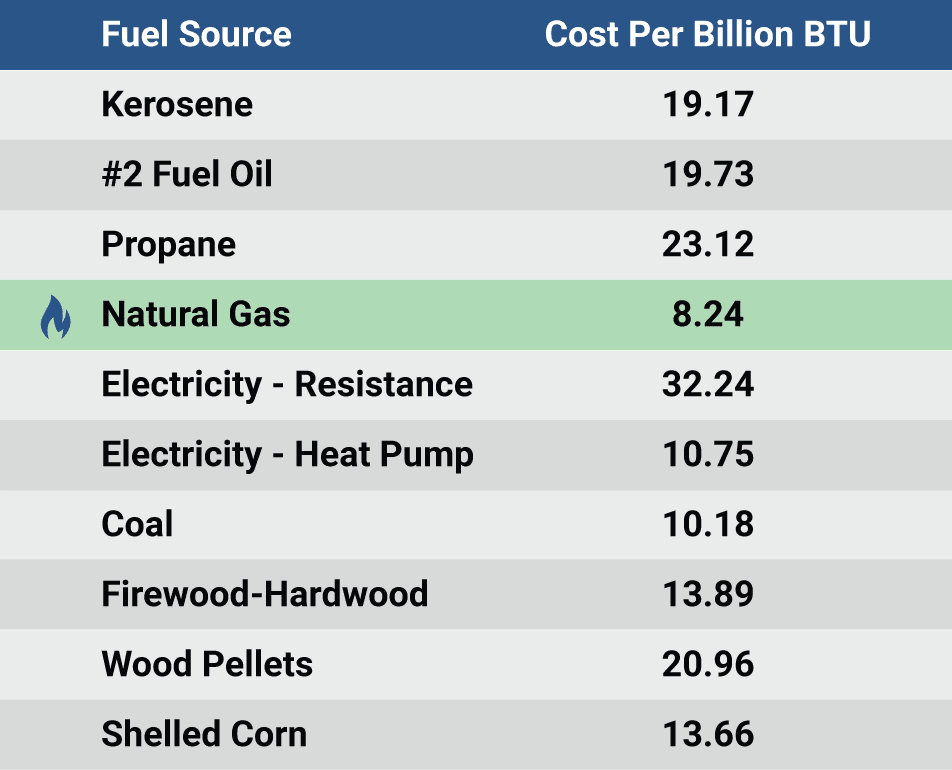
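To put the two EIA figures above in proportion, the short calculation below estimates the share of U.S. primary energy consumption that went to electric power in 2020. It uses the article's rounded values ("almost 93" and "almost 36" quadrillion Btu), so the result is approximate; the variable names are illustrative.

```python
# Rounded 2020 figures quoted in the article, in quadrillion Btu ("quads").
TOTAL_PRIMARY_QUADS = 93.0
ELECTRIC_POWER_QUADS = 36.0

# Fraction of total primary energy consumption attributed to electric power.
electric_share = ELECTRIC_POWER_QUADS / TOTAL_PRIMARY_QUADS

print(f"Electric power share of primary energy: {electric_share:.1%}")
```

With these rounded inputs, electric power comes to a little under two-fifths of total primary energy consumption.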

Data support natural gas as the ideal choice for everyday consumers

Every purchase is limited by cost. No matter how superior one option is to the others, the buyer must contend with affordability. To evaluate energy sources properly, the cost of each option should be expressed on the same energy basis, per billion Btu. Oklahoma State University published a paper, “True Cost of Energy Comparisons – Apples to Apples,” that provides this very information. When all variables are considered, including energy content, heat conversion efficiency, supply availability, and cost, the clear winner in this contest is NATURAL GAS. Not only is this energy source easy on the pocketbook of everyday households, it is also one of the cleanest for the environment. So, let's keep moving natural gas through the pipelines to lighten the load of energy costs for everyone!
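For readers who want to run their own apples-to-apples comparison, the sketch below shows one way to put any fuel on a common cost basis per billion Btu of delivered heat. It is not the Oklahoma State University method. The formula, the function name, and every input value are hypothetical placeholders chosen only to show the arithmetic; real prices, energy contents, and efficiencies would have to come from a utility bill or a published source.

```python
def cost_per_billion_btu(price_per_unit: float,
                         btu_per_unit: float,
                         efficiency: float) -> float:
    """Cost in dollars of one billion Btu of delivered heat.

    price_per_unit: dollars paid per purchased unit of fuel
    btu_per_unit:   energy content of one purchased unit, in Btu
    efficiency:     fraction of that energy delivered as useful heat (0 to 1)
    """
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in the range (0, 1]")
    if btu_per_unit <= 0:
        raise ValueError("btu_per_unit must be positive")
    # Useful Btu obtained from each purchased unit after conversion losses.
    delivered_btu = btu_per_unit * efficiency
    return price_per_unit * 1e9 / delivered_btu


# HYPOTHETICAL placeholder inputs, not real market data.
example_cost = cost_per_billion_btu(price_per_unit=1.00,
                                    btu_per_unit=100_000,
                                    efficiency=0.80)

print(f"${example_cost:,.0f} per billion Btu delivered")
```

Running the same function on each fuel with real local figures gives numbers that can be compared directly, because both energy content and conversion efficiency are already folded in.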